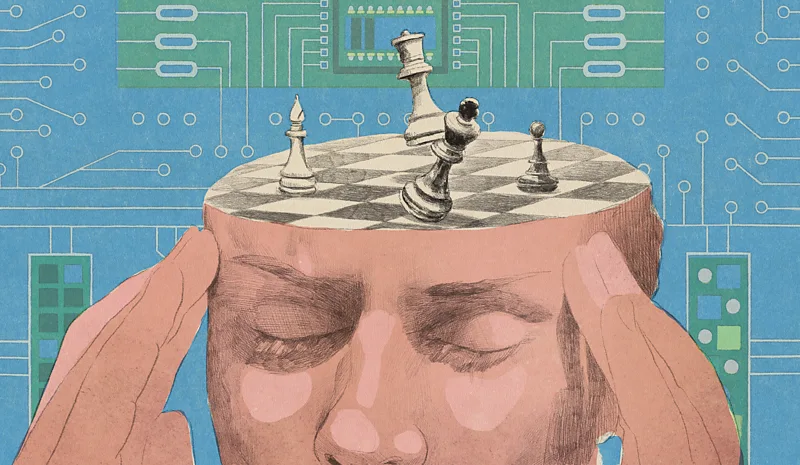

In March, a man called Noland Arbaugh demonstrated that he could play chess using only his mind. After living with paralysis for eight years, he had gained the ability to perform tasks previously inaccessible to him, thanks to a brain implant designed by Neuralink, a company founded by Elon Musk.

“It just became intuitive for me to imagine the cursor moving,” Arbaugh said in a live stream. “I just stare somewhere on the screen, and it would move where I wanted it to.”

Arbaugh’s description alludes to a sense of his own agency: he was suggesting he was responsible for moving the chess piece. However, was it him or the implant that performed the actions?

As a philosopher of mind and AI ethicist, I am fascinated by this question. Brain-computer interface (BCI) technologies like Neuralink symbolise a new era in the intertwining of the human brain and machines, asking us to reconsider our intuitions about identity, the self and personal responsibility. In the near term, the technology promises many benefits for people like Arbaugh, but the applications could go further. The company’s long-term vision is to make such implants available to the general population to augment and enhance their abilities too. If a machine can perform acts once reserved for the cerebral matter inside our skulls, should it be considered an extension of the human mind, or something separate?

The extended mind

For decades, philosophers have debated the borders of personhood: where does our mind end, and the external world begin? On a simple level, you might assume that our minds rest within our brains and bodies. However, some philosophers have proposed that it’s more complicated than that.

In 1998, the philosophers David Chalmers and Andy Clark presented the “extended mind” hypothesis, suggesting that technology could become part of us. In philosophical language, the pair proposed an active externalism, a view in which humans can delegate facets of their thought processes to external artefacts, thereby integrating these artefacts into the human mind itself. This was before the smartphone, but it predicted the way that we now offload cognitive tasks to our devices, from wayfinding to memory.

As a thought experiment, Chalmers and Clark also imagined a scenario “in the cyberpunk future” where somebody with a brain implant manipulated objects on a screen – much like Arbaugh has just done.

To play chess, Arbaugh imagines what he wants, like moving a pawn or bishop. And his implant, in this case Neuralink’s N1, picks up neural patterns of his intent, before decoding, processing, and executing actions.

Decades ago, philosophers imagined futuristic scenarios where implants in the brain extended the mind. Now it’s here (Credit: Emmanuel Lafont)

So, what should we make of this philosophically now that it’s actually happened? Is Arbaugh’s implant part of his mind, entwined with his intentions? If it’s not, then it poses thorny questions about whether he has true ownership of his actions.

To understand why, let’s consider a conceptual distinction: happenings and doings. Happenings encapsulate the entirety of our mental processes, such as our thoughts, beliefs, desires, imaginations, contemplations, and intentions. Doings are happenings that are acted upon, such as the finger movements you’re using to scroll down this article right now.

Usually, the gap between happenings and doings does not exist. For instance, let’s take the case of a hypothetical woman, Nora – not a BCI-integrated person – playing chess. She can form an intention through regulating her happenings to move the pawn to d3, and simply does so by moving her hand. In Nora’s case, the intention and the doing are inseparable; she can attribute the action of moving the pawn to herself.

Arbaugh, however, must imagine his intent, and it’s the implant that performs the action in the external world. Here, happenings and doings are separate.

This raises some serious concerns, such as whether a person using a brain implant to augment their abilities can gain executive control over their BCI-integrated actions. While human brains and bodies already produce plenty of involuntary actions, from sneezes to clumsiness to pupil dilation, could implant-controlled actions feel alien? Might the implant seem like a parasitic intruder gnawing away at the sanctity of a person’s volition?

I call this problem the contemplation conundrum. In Arbaugh’s case, he skips crucial stages of the causal chain, such as the movement of his hand that instantiates his chess move. What happens if Arbaugh first thinks of moving his pawn to d3 but, within a fraction of a second, changes his mind and realises he would rather move it to d4? Or what if he is running through possibilities in his imagination, and the implant mistakenly interprets one as an intention?

The stakes are low on a chess board, but if these implants become more common, the question of personal responsibility becomes more fraught. What if, for example, bodily harm to another person was caused by an implant-controlled action?

And this is not the only ethical problem these technologies raise. Perfunctory commercialisation without fully resolving the contemplation conundrum and other issues could pave the way to a dystopia reminiscent of science-fiction tales. William Gibson’s novel Neuromancer, for example, highlighted how implants could lead to loss of identity, manipulation, and an erosion of privacy of thought.

If an implant performs an involuntary action, should it be considered separate from its owner? (Credit: Emmanuel Lafont)

The crucial question in the contemplation conundrum is: when does a “happening of imagination” turn into “intentional imagination to act”? When I apply my imagination to contemplate what words to use in this sentence, this is itself an intentional process. The imagination directed towards action – typing the words – is also intentional.

In terms of neuroscience, differentiating between imagination and intention is nearly impossible. A study conducted in 2012 by one group of neuroscientists concluded that there are no neural events that qualify as “intentions to act”. Without the capability to recognise neural patterns that mark this transition in someone like Arbaugh, it could be unclear which imagined scenario is the cause of an effect in the physical world. This allows partial responsibility and ownership of the action to fall on the implant, again raising the question of whether the actions are truly his, and whether they are a part of his personhood.

However, now that Chalmers and Clark’s extended mind thought experiment has become reality, I propose revisiting their foundational ideas as one method to bridge the divide between happenings and doings in people with brain implants. The adoption of the extended mind hypothesis would allow someone like Arbaugh to hold responsibility for their actions rather than divide it with the implant. This cognitive view suggests that to experience something as one’s own, one must think about it as one’s own. In other words, they must think of the implant as a part of their self-identity and within the borders of their inner life. If so, a sense of agency, ownership, and responsibility can follow.

Brain implants like Arbaugh’s have undoubtedly opened a new door for philosophical discussions about the border between mind and machine. The debate over action and agency has traditionally circled around the skin and skull boundary of identity. However, with brain implants, this boundary has become malleable – and that means the self may extend further into technology than ever before. Or as Chalmers and Clark observed: “Once the hegemony of skin and skull is usurped, we may be able to see ourselves more truly as creatures of the world.”

*Dvija Mehta is an AI ethics researcher and philosopher of mind at the Leverhulme Centre for the Future of Intelligence, University of Cambridge.
